Forbidden Space AI: The Deepfake Crisis Surrounding Scarlett Johansson

What would you do if a hyper-realistic, sexually explicit video of you—created without your consent using artificial intelligence—suddenly appeared online, viewed millions of times? This isn't a hypothetical scenario from a cyberpunk novel; it's the alarming reality facing celebrities like Scarlett Johansson and countless private individuals. The recent surfacing of a deepfake video falsely depicting the actress has ignited a firestorm, pushing her to issue one of her strongest warnings yet about the unregulated dangers of AI. This incident is not isolated. It’s part of a growing epidemic of non-consensual deepfake pornography that is exposing catastrophic gaps in our legal systems and demanding urgent, global legislative action. From a UK broadcaster facing potential criminal charges to a Hollywood A-lister calling for U.S. federal law, the battle against synthetic media abuse has reached a critical juncture.

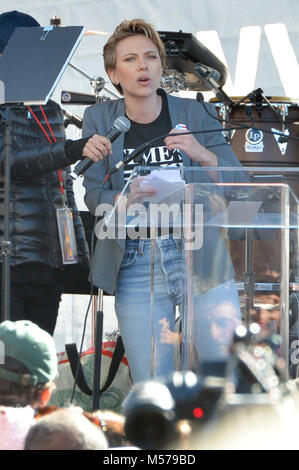

Understanding the Crisis: Who is Scarlett Johansson?

Before diving into the deepfake controversy, it’s essential to understand the figure at its center. Scarlett Johansson is one of Hollywood's most recognizable and highest-grossing actresses, known for her roles in blockbuster franchises like the Marvel Cinematic Universe (as Black Widow) and films such as Lost in Translation and Her.

| Personal Detail | Information |
|---|---|
| Full Name | Scarlett Ingrid Johansson |
| Date of Birth | November 22, 1984 |
| Place of Birth | New York City, New York, U.S. |
| Profession | Actress, Singer |
| Notable Awards | BAFTA Award, Tony Award, Academy Award Nominee |
| Marital Status | Married (to Colin Jost, 2020-present) |
| Children | 2 (a daughter and a son) |
| Estimated Net Worth | ~$165 million (2023) |

Her status as a global celebrity makes her a prime target for digital identity theft, but her decision to publicly fight back transforms her from a victim into a pivotal advocate for change.

The Spark: A Deepfake Video Surfaces

The current crisis was ignited when a fabricated video, in which Scarlett Johansson’s likeness was superimposed onto an adult film star’s body, was discovered circulating online. This is not a simple case of photo manipulation; it is a sophisticated deepfake: a piece of AI-generated synthetic media in which a person’s face is seamlessly mapped onto another’s body in a video, creating a convincing forgery.

Johansson’s representatives confirmed the video’s existence and its malicious nature. In a statement to People magazine, she expressed her frustration and alarm, noting that this is not the first time her image has been misused in this way. The specific video in question has reportedly garnered over 1.5 million views on one of the many websites dedicated to hosting such non-consensual content. The sheer volume of views underscores the massive distribution reach these violations achieve before they can be taken down, if they ever are.

The Scale of the Problem: A Pervasive Epidemic

Johansson’s experience is the tip of an enormous iceberg. Pornographic deepfake videos are now abundant: dedicated websites like celebdeepfakes.net and niceporn.tv (which brands itself as "the largest porn video site") host thousands of such videos targeting hundreds of celebrities. These platforms often lure viewers with provocative titles that pair a celebrity's name with phrases such as "leaked sex tape" and "celebrity fake porn," blatantly advertising the synthetic nature of the content while simultaneously trafficking in the violation.

These videos are not harmless pranks. They are a form of digital sexual assault and image-based sexual abuse. The psychological harm to victims is profound, involving feelings of violation, humiliation, and powerlessness. The commercial exploitation of their likeness without consent is a direct attack on personal autonomy and dignity.

Legal Loopholes and the UK Precedent: Channel 4's Critical Error

While Johansson focuses her call to action on the United States, a parallel legal drama in the United Kingdom provides a stark case study of how existing laws can be applied—or fail to be applied—to this new technology. Channel 4 in the UK is facing legal criticism after airing a segment in its documentary Vicky Pattison that inadvertently highlighted the very vulnerabilities it sought to expose.

The documentary was intended to demonstrate how easily women’s faces can be swapped onto pornographic videos. However, in doing so, it ended up potentially violating the Sexual Offences Act 2003. The relevant provision appears to be Section 66B, inserted by the Online Safety Act 2023, which makes it a criminal offence to share an intimate image of a person without their consent, including an image that only appears to show that person. By broadcasting fabricated, sexually explicit imagery, even for a journalistic purpose, the network may have crossed a legal line, because the base offence turns on the act of sharing without consent rather than on an intent to cause alarm or distress.

This incident suggests that current legal frameworks, while not designed with AI in mind, can be applied to those who create or share such material, including media organizations that mishandle sensitive synthetic content. It signals that the creation and distribution of deepfake pornography are not just ethical violations but potential crimes.

Johansson's Call to Arms: Demanding Federal Legislation

Reacting to the latest violation and the broader landscape, Scarlett Johansson has once again raised the alarm over the dangers of artificial intelligence. Her message is clear and forceful: the government should pass a law limiting the use of AI and regulating deepfake content more strictly.

She is specifically calling for the U.S. to enact comprehensive federal legislation. Currently, the legal response in the United States is a patchwork of state laws. Some states, like California, Texas, and Virginia, have passed laws against non-consensual deepfake pornography, but there is no nationwide statute. Johansson argues that a federal law is essential because:

- Jurisdictional Nightmares: Deepfakes hosted on servers overseas are difficult to prosecute under state laws.

- Uniform Standards: A federal law would create a consistent definition of the crime and uniform penalties.

- Platform Accountability: It could impose clearer responsibilities on tech companies and websites to proactively detect, remove, and prevent the upload of such material.

Her advocacy highlights a crucial point: AI technology has far outpaced the legal protections for individual privacy and image rights. The law must catch up to prevent synthetic media from becoming a tool for widespread harassment, blackmail, and reputational destruction.

The Technical and Social Mechanics of the "Forbidden Space"

The term "Forbidden Space" aptly describes the digital frontier where AI ethics, law, and personal rights collide. Deepfake technology, initially developed for legitimate uses in film (de-aging actors, resurrecting deceased performers with consent), has been weaponized. The process typically involves:

- Data Harvesting: Collecting thousands of images/videos of the target (easily sourced from social media, red carpet events, films).

- Model Training: Using machine learning algorithms (like Generative Adversarial Networks or GANs) to analyze and learn the target’s facial features, expressions, and mannerisms.

- Face-Swapping: Applying the trained model to a source video, replacing the original face with the target’s likeness in real-time.

- Distribution: Uploading the finished product to forums, social media, and dedicated porn sites, often with SEO-optimized titles to maximize views.

The social impact is devastating. It erodes trust in visual media ("seeing is no longer believing"), disproportionately targets women and girls, and can be used for disinformation, blackmail, and to silence journalists and activists. The Channel 4 case illustrates the problem: although the documentary was supposed to highlight how vulnerable women are to having their likeness misused, it ended up potentially violating the Sexual Offences Act 2003 itself. That irony encapsulates the systemic failure.

What Can Be Done? Practical Steps and Solutions

While legislative change is the ultimate goal, individuals and platforms must act now.

For Individuals:

- Reverse Image Search: Regularly search for your own images online using tools like Google Reverse Image Search to detect unauthorized use.

- Digital Literacy: Be skeptical of sensational videos, especially those from unverified sources. Look for inconsistencies in lighting, blinking, or audio.

- Report Aggressively: Immediately report deepfake content to the hosting platform (using its abuse or terms-of-service violation reporting tools) and to law enforcement if you are the direct victim.

- Legal Counsel: Consult with a lawyer specializing in privacy law or cyber harassment. Many states with deepfake laws allow for civil suits.

For Tech Companies & Platforms:

- Proactive Detection: Invest in and deploy AI tools specifically designed to detect deepfakes and synthetic media before they are widely shared.

- Swift Takedown Policies: Implement clear, enforced policies against non-consensual intimate imagery, including deepfakes, with rapid response teams.

- Transparency Reports: Publicly report the volume of deepfake removal requests and actions taken.

- User Empowerment: Provide easy-to-use, prominent reporting mechanisms for victims.

For Policymakers:

- Define the Crime Clearly: Legislation must explicitly criminalize the creation and distribution of non-consensual deepfake pornography, with enhanced penalties for intent to harass or profit.

- Hold Platforms Liable: Create a "duty of care" standard for platforms, similar to approaches in the EU's Digital Services Act, making them responsible for mitigating systemic risks like deepfake abuse.

- Fund Research: Support the development of detection technology and digital forensics tools for law enforcement.

- Public Education: Fund campaigns to raise awareness about deepfakes and available legal recourses.

Conclusion: The Imperative for a Legal and Ethical Framework

The surfacing of a "Forbidden Space AI sex tape" featuring Scarlett Johansson is a clarion call. It exposes a technological revolution that has left our legal and ethical frameworks in the dust. From the UK's Channel 4 inadvertently testing the bounds of the Sexual Offences Act to Johansson's passionate plea for U.S. federal intervention, the message is the same: the status quo is unacceptable.

The proliferation of sites like celebdeepfakes.net and the millions of views amassed by these violations demonstrate a lucrative, low-risk ecosystem for perpetrators. Search queries that pair Johansson's name with terms like "sextape" and "nude photos" are not just keywords; they represent a continuous, targeted assault on personal autonomy in the digital age.

Johansson’s stance is pivotal because she uses her platform to articulate a truth that applies to everyone: your digital likeness is part of your identity, and non-consensual manipulation of that likeness is a violation. The path forward requires a multi-pronged attack: robust, modern laws that recognize synthetic media abuse as a serious crime; technological innovation in detection and prevention; and a cultural shift that rejects the consumption and normalization of such content.

The "forbidden space" of AI abuse will only grow more sophisticated and damaging without decisive action. Scarlett Johansson has drawn a line in the sand. The question for legislators, tech leaders, and society as a whole is whether we will have the courage to enforce it. The protection of personal dignity in the 21st century depends on the answer.