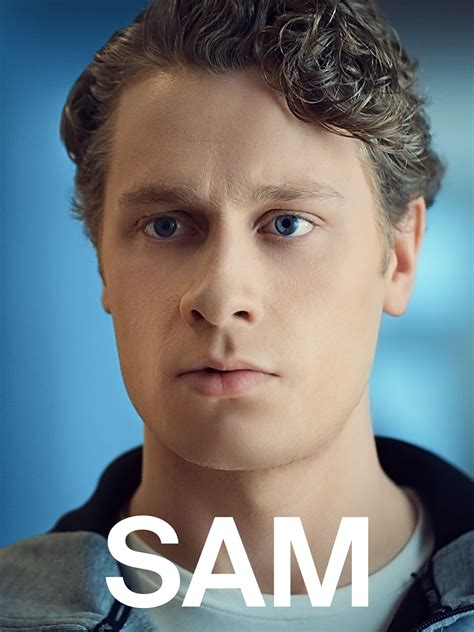

The Evolution Of Meta's SAM: From Image Segmentation To Video & Concept Understanding

Introduction: What is "Segment Anything"?

Have you ever wondered how AI systems can precisely isolate a specific object in a complex photo, like picking out a single bird from a crowded tree or a particular car in a busy street scene? This capability is called segmentation, and it's a fundamental task in computer vision (CV). While traditional models required extensive training for each new object class, a breakthrough came with Meta AI's Segment Anything Model (SAM). SAM introduced a "promptable" segmentation paradigm, allowing a single model to segment anything in an image based on simple prompts like clicks or boxes. This article traces the evolution of this technology, from the original SAM to the video-capable SAM 2 and the concept-driven SAM 3, and looks at its applications and future.

What is Segmentation and Why Does SAM Matter?

In computer vision, segmentation is the process of partitioning a digital image into multiple segments (sets of pixels, also called image regions). The goal is to change the representation of an image into something more meaningful and easier to analyze. It's more granular than object detection (which draws bounding boxes) because it provides a pixel-level mask for each object.
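To make the difference between a bounding box and a pixel-level mask concrete, here is a minimal sketch in plain Python. The helper names (`mask_to_bbox`, `mask_area`, `bbox_area`) and the tiny L-shaped mask are made up for illustration and do not come from any SAM library.

```python
from typing import List, Tuple

Mask = List[List[int]]  # 1 = object pixel, 0 = background


def mask_to_bbox(mask: Mask) -> Tuple[int, int, int, int]:
    """Return the tightest (x_min, y_min, x_max, y_max) box around the mask."""
    coords = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not coords:
        raise ValueError("mask is empty")
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return min(xs), min(ys), max(xs), max(ys)


def mask_area(mask: Mask) -> int:
    """Number of pixels that belong to the object."""
    return sum(sum(row) for row in mask)


def bbox_area(box: Tuple[int, int, int, int]) -> int:
    """Number of pixels covered by an inclusive pixel-coordinate box."""
    x0, y0, x1, y1 = box
    return (x1 - x0 + 1) * (y1 - y0 + 1)


# An L-shaped object: the box around it also covers background pixels.
l_shape = [
    [1, 0, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
box = mask_to_bbox(l_shape)
print("box:", box, "mask pixels:", mask_area(l_shape), "box pixels:", bbox_area(box))
```

The box covers nine pixels while the object itself occupies only five, which is the extra precision a mask provides over a detection box.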

The Challenge Before SAM: Historically, achieving high-quality segmentation required collecting large, specialized datasets and training a new model for each specific task (e.g., segmenting only cats, only cars, only medical cells). This was labor-intensive and lacked generalization.

SAM's Revolutionary Approach: The Segment Anything Model (SAM), developed by Meta AI (formerly Facebook AI Research), is a promptable foundation model for image segmentation. This means:

- It was trained on a massive, diverse dataset of over 1 billion masks across 11 million images.

- It accepts prompts, sparse inputs such as a point or a rough box, to specify which object to segment. The original paper also explored free-text prompts, but only as a proof of concept.

- It can generalize to segment objects it has never seen during training, a property known as zero-shot transfer.

This "Segment Anything" ambition democratized high-quality segmentation, making it accessible for countless downstream applications without the need for per-task model training.
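The prompt-in, mask-out interface can be illustrated with a toy stand-in. The sketch below is not SAM's algorithm: the hypothetical `segment_from_point` function simply flood-fills the region of equal pixel values around a click, which is enough to show how a single point prompt selects which object's mask is returned.

```python
from collections import deque
from typing import List, Tuple

Image = List[List[int]]


def segment_from_point(image: Image, point: Tuple[int, int]) -> List[List[int]]:
    """Toy promptable segmenter (NOT SAM): return a binary mask of the
    4-connected region that shares the pixel value at `point` = (x, y)."""
    height, width = len(image), len(image[0])
    px, py = point
    target = image[py][px]
    mask = [[0] * width for _ in range(height)]
    mask[py][px] = 1
    queue = deque([(px, py)])
    while queue:
        x, y = queue.popleft()
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and not mask[ny][nx] and image[ny][nx] == target:
                mask[ny][nx] = 1
                queue.append((nx, ny))
    return mask


# Three "objects" encoded as pixel values 0, 5 and 7.
image = [
    [0, 0, 5, 5],
    [0, 7, 5, 5],
    [7, 7, 0, 0],
]
mask_a = segment_from_point(image, (2, 0))  # click on the "5" object
mask_b = segment_from_point(image, (1, 1))  # click on the "7" object
for row in mask_a:
    print(row)
```

The same image yields different masks depending only on the prompt. A real model replaces the equal-value rule with learned image and prompt embeddings.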

SAM 2: Extending Capability to Video

The first major evolution was SAM 2, which expanded the model's domain from static images to video segmentation.

Key Advancements of SAM 2:

- Video Promptable Segmentation: SAM 2 can segment objects consistently across video frames. You can provide a prompt (like a click) on a frame, typically the first, and the model tracks and segments that object throughout the rest of the video sequence. Further prompts on later frames can correct the result.

- Memory Mechanism: It incorporates a memory module that encodes information from previous frames, allowing it to maintain object identity and handle occlusions, appearance changes, and camera motion.

- Unified Architecture: SAM 2 adapts the image-based SAM architecture for video by processing frames sequentially while leveraging memory, creating a seamless pipeline for temporal understanding.
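The role of memory in video segmentation can be sketched with a deliberately simple heuristic. SAM 2's actual memory is learned and operates on frame features. The toy below keeps the last confident mask and links each new frame to it by overlap (IoU) instead. The function names, the candidate-region input format, and the `min_iou` threshold are all assumptions made for this illustration.

```python
from typing import List, Optional, Set, Tuple

Pixel = Tuple[int, int]
Region = Set[Pixel]


def iou(a: Region, b: Region) -> float:
    """Intersection over union of two pixel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def rect(x0: int, y0: int, w: int, h: int) -> Region:
    """Helper to build a rectangular region for the demo."""
    return {(x, y) for x in range(x0, x0 + w) for y in range(y0, y0 + h)}


def track(frames: List[List[Region]], click: Pixel, min_iou: float = 0.2) -> List[Region]:
    """Propagate the clicked object through the frames using a one-slot memory."""
    # Frame 0: the click prompt picks the candidate region that contains it.
    memory: Optional[Region] = next((r for r in frames[0] if click in r), None)
    if memory is None:
        raise ValueError("click does not hit any region in the first frame")
    outputs = [memory]
    for candidates in frames[1:]:
        best = max(candidates, key=lambda r: iou(r, memory), default=set())
        if iou(best, memory) >= min_iou:
            memory = best            # confident match: refresh the memory
            outputs.append(best)
        else:
            outputs.append(set())    # likely occluded: keep the old memory
    return outputs


frames = [
    [rect(0, 0, 3, 3), rect(6, 0, 3, 3)],  # two objects; the click selects the left one
    [rect(1, 0, 3, 3), rect(6, 1, 3, 3)],  # both move slightly
    [rect(6, 2, 3, 3)],                    # the left object is hidden
    [rect(2, 0, 3, 3), rect(6, 3, 3, 3)],  # the left object reappears
]
masks = track(frames, click=(1, 1))
print([len(m) for m in masks])
```

Because the memory is not overwritten during the occluded frame, the object is picked up again when it reappears. That is the behavior the memory module is meant to provide, here reduced to its simplest form.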

The Critical Importance of Fine-Tuning SAM 2

While SAM 2 is powerful out-of-the-box, its true potential for specific industries is unlocked through fine-tuning.

- Domain Adaptation: Fine-tuning on a specialized dataset (e.g., medical ultrasound videos, satellite imagery, manufacturing defect clips) teaches SAM 2 the unique textures, scales, and contexts of that domain.

- Task Specialization: It can be optimized for specific video tasks like video object segmentation (VOS), interactive video segmentation, or video instance segmentation.

- Improved Efficiency: Fine-tuning does not shrink a model by itself, but it lets a smaller checkpoint recover accuracy on a narrow domain, which helps when deploying on edge devices or in real-time applications.

Practical Tip: When fine-tuning SAM 2, start with a high-quality, well-annotated dataset of video sequences relevant to your use case. Use a combination of sparse prompts (simulating user interaction) and dense mask supervision to balance generalization and accuracy.
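The two ingredients in this tip can be sketched without any deep learning framework. In the example below, `sample_clicks` simulates sparse user prompts from a ground-truth mask and `dice_loss` is one common form of dense mask supervision. Both names are hypothetical helpers, and the 1 = foreground, 0 = background label convention is an assumption borrowed from typical point-prompt interfaces.

```python
import random
from typing import List, Tuple

Mask = List[List[int]]


def sample_clicks(gt_mask: Mask, n_pos: int, n_neg: int,
                  rng: random.Random) -> Tuple[List[Tuple[int, int]], List[int]]:
    """Simulate user clicks: positives inside the object, negatives on background."""
    inside = [(x, y) for y, row in enumerate(gt_mask) for x, v in enumerate(row) if v]
    outside = [(x, y) for y, row in enumerate(gt_mask) for x, v in enumerate(row) if not v]
    pos = rng.sample(inside, min(n_pos, len(inside)))
    neg = rng.sample(outside, min(n_neg, len(outside)))
    points = pos + neg
    labels = [1] * len(pos) + [0] * len(neg)
    return points, labels


def dice_loss(pred: List[List[float]], gt_mask: Mask, eps: float = 1e-6) -> float:
    """Dense supervision: 1 - Dice overlap between predicted probabilities and the mask."""
    inter = sum(p * g for pr, gr in zip(pred, gt_mask) for p, g in zip(pr, gr))
    total = sum(map(sum, pred)) + sum(map(sum, gt_mask))
    return 1.0 - (2.0 * inter + eps) / (total + eps)


gt = [
    [0, 1, 1],
    [0, 1, 1],
    [0, 0, 0],
]
points, labels = sample_clicks(gt, n_pos=2, n_neg=1, rng=random.Random(0))
perfect = [[float(v) for v in row] for row in gt]
empty = [[0.0] * 3 for _ in range(3)]
print(points, labels)
print(dice_loss(perfect, gt), dice_loss(empty, gt))
```

In a real training loop the sampled points would be fed to the model as prompts and the loss would be computed on its predicted mask. Here the two pieces are shown in isolation.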

SAM in Action: Application in Remote Sensing (RSPrompter)

A prime example of SAM's adaptability is the RSPrompter project, which explores SAM's application in remote sensing (RS) imagery—satellite and aerial photos. This domain presents unique challenges: objects are often small, densely packed, have arbitrary orientations, and appear in complex backgrounds.

The RSPrompter paper targets instance segmentation and compares four ways of applying SAM to it:

- (a) SAM-seg: This approach uses SAM's Vision Transformer (ViT) image encoder as a feature extractor. The backbone is frozen or lightly fine-tuned, and an instance segmentation head is added on top and trained on remote sensing datasets. This leverages SAM's feature representation inside a standard segmentation pipeline.

- (b) SAM-cls: SAM's "everything" mode first generates masks for all objects in the image, and a separate classifier then assigns each mask a category (such as "building" or "airplane").

- (c) SAM-det: An object detector is trained to produce bounding boxes, which are then passed to SAM as box prompts to obtain the corresponding masks.

- (d) RSPrompter: The proposed method learns to generate category-aware prompt embeddings, so that SAM's mask decoder can output labeled instance masks without manual prompts.

Takeaway: Projects like RSPrompter demonstrate that SAM is not just a "natural image" tool. Its core architecture can be repurposed as a powerful feature encoder for domain-specific segmentation tasks, making it a foundational component in specialized AI pipelines.
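The frozen-backbone-plus-trainable-head idea from item (a) can be shown at toy scale. In this sketch a fixed two-feature function stands in for SAM's ViT encoder and a per-pixel logistic regression stands in for the decoder head. `FROZEN_W`, `encode`, `train_head` and the brightness-based "building" labels are all invented for illustration and are far simpler than any real remote sensing pipeline.

```python
import math
from typing import List, Tuple

# Frozen "backbone" weights: never updated during training (stand-in for SAM's ViT).
FROZEN_W = (1.0, -0.5)


def encode(pixel: float) -> List[float]:
    """Hypothetical frozen feature extractor: pixel intensity -> two features."""
    return [FROZEN_W[0] * pixel, FROZEN_W[1] * pixel * pixel]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(pixels: List[float], labels: List[int],
               epochs: int = 500, lr: float = 0.5) -> Tuple[List[float], float]:
    """Train only the head (logistic regression) on top of the frozen features."""
    w, b = [0.0, 0.0], 0.0
    feats = [encode(p) for p in pixels]      # backbone output, computed once
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            grad = sigmoid(w[0] * f[0] + w[1] * f[1] + b) - y
            w[0] -= lr * grad * f[0]         # only head parameters change
            w[1] -= lr * grad * f[1]
            b -= lr * grad
    return w, b


def predict(pixel: float, w: List[float], b: float) -> int:
    f = encode(pixel)
    return int(sigmoid(w[0] * f[0] + w[1] * f[1] + b) >= 0.5)


# Toy labels: bright pixels are "building" (1), dark pixels are background (0).
pixels = [0.1, 0.2, 0.3, 0.7, 0.8, 0.9]
labels = [0, 0, 0, 1, 1, 1]
w, b = train_head(pixels, labels)
predictions = [predict(p, w, b) for p in pixels]
print(predictions)
```

Because the features are computed once and never change, training touches only three parameters. The same division of labor is what makes this kind of adaptation cheap relative to retraining the whole model.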

SAM 3: The Era of Concept-Driven Detection, Segmentation, and Tracking

Building on SAM 2's video capabilities, SAM 3 represents the next leap: a unified architecture for detection, segmentation, and tracking based on open-vocabulary concepts.

How SAM 3 Works:

SAM 3 accepts two types of prompts simultaneously:

- Text Prompts: Natural language descriptions like "a penguin" or "a red sports car."

- Image Exemplar Prompts: A patch from an image, a rough mask, or a bounding box provided as a visual example.

The model uses:

- A text encoder (like CLIP) to understand the semantic meaning of the text prompt.

- An image encoder (likely an enhanced version of SAM's ViT) to process both the input image/video frame and the visual exemplar prompt.

- A prompt aggregation and decoding module that fuses information from the text and image prompts to generate accurate segmentation masks and, crucially, to track the specified concept across video frames.

This creates a detect-segment-track loop driven by concepts rather than just visual prompts. You can say "find all bicycles in this video" and SAM 3 will detect them, segment them pixel-accurately, and track each instance over time.
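A rough sketch of such a loop follows. It makes several simplifying assumptions: detections arrive as pre-labeled boxes, exact label equality stands in for learned text-image matching, and boxes are used instead of masks. The `track_concept` function and its greedy IoU linking are illustrative only and do not describe SAM 3's internals.

```python
from typing import Dict, List, Tuple

Box = Tuple[int, int, int, int]            # (x0, y0, x1, y1)
Detection = Tuple[str, Box]                # (label, box)


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix1 - ix0) * max(0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union else 0.0


def track_concept(frames: List[List[Detection]], concept: str,
                  min_iou: float = 0.3) -> List[Dict[int, Box]]:
    """Per frame: keep detections matching the concept, then link each one to an
    existing track by greedy IoU. Returns one {track_id: box} dict per frame."""
    tracks: Dict[int, Box] = {}            # last known box per track id
    next_id = 0
    results = []
    for detections in frames:
        current: Dict[int, Box] = {}
        for label, box in detections:
            if label != concept:           # stand-in for text-image matching
                continue
            candidates = [(box_iou(box, prev), tid)
                          for tid, prev in tracks.items() if tid not in current]
            score, tid = max(candidates, default=(0.0, -1))
            if score < min_iou:            # no good match: start a new track
                tid, next_id = next_id, next_id + 1
            current[tid] = box
        tracks.update(current)
        results.append(current)
    return results


frames = [
    [("bicycle", (0, 0, 10, 10)), ("car", (50, 50, 80, 80)), ("bicycle", (30, 0, 40, 10))],
    [("bicycle", (32, 0, 42, 10)), ("bicycle", (2, 0, 12, 10)), ("car", (52, 50, 82, 80))],
]
result = track_concept(frames, "bicycle")
print(result)
```

Each bicycle keeps its track id from one frame to the next even though the detections arrive in a different order, and the car is ignored because it does not match the concept.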

Implications:

- Unified Interface: One model for referring expression segmentation, open-vocabulary detection, and video object tracking.

- Reduced Annotation Cost: Drastically lowers the need for bounding box or mask annotations in video datasets.

- Advanced Video Understanding: Enables complex queries like "track every person wearing a blue jacket" in a surveillance video or "segment all airplanes taking off" in an airport feed.

Addressing Practical Implementation: Software and Stability

Deploying these models means navigating a software ecosystem, and two practical hurdles come up repeatedly: library version mismatches and system instability under load.

- SAM Libraries: When working with implementations of SAM (such as the segment-anything or sam2 repositories), you need compatible versions of the core libraries (PyTorch, CUDA, ONNX, etc.). Be aware that "SAM" is an overloaded acronym: if a flight-simulator add-on (like an Aerosoft product) complains about missing "latest SAM libraries," it is most likely referring to a scenery plugin of the same name rather than to Segment Anything. Either way, the fix is to consult the application's documentation or forums for the exact versions it requires.

- System Stability with SAM: Running large models like SAM 2/3 is GPU and memory intensive. Note also that in AMD Software, "SAM" stands for Smart Access Memory, a PCIe Resizable BAR feature unrelated to segmentation. If heavy GPU workloads, or enabling such a feature, cause system instability:

- Check Memory Stability: Use tools like MemTest86 to ensure your RAM is functioning correctly. Faulty RAM is a common cause of crashes under heavy load.

- Update BIOS/UEFI: A newer BIOS can improve memory compatibility and power management, which is critical for stable GPU-intensive tasks.

- Verify Driver & Framework Compatibility: Ensure your GPU drivers (e.g., AMD Radeon Software) are up-to-date and fully compatible with the version of PyTorch/TensorFlow your SAM implementation uses. Mismatches here can cause silent failures or crashes even if the feature appears "on."
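A quick way to start a compatibility check is to list which versions are actually installed. The sketch below uses only the Python standard library. The package names passed in are examples, and the `report_versions` helper is a hypothetical convenience function, not part of any SAM repository.

```python
import platform
from importlib import metadata
from typing import Dict, Iterable, Optional


def report_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if absent."""
    report: Dict[str, Optional[str]] = {"python": platform.python_version()}
    for name in packages:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = None
    return report


versions = report_versions(["torch", "torchvision", "onnxruntime"])
for name, version in versions.items():
    print(f"{name}: {version or 'not installed'}")
```

Compare the printed versions against the requirements listed by the SAM implementation you are using before investigating drivers or hardware.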

Conclusion: The Future is Promptable and Foundational

The journey from SAM to SAM 2 and now SAM 3 illustrates a clear trajectory in AI: from specialized, single-task models to generalist, promptable foundation models. SAM broke the barrier for arbitrary image segmentation. SAM 2 conquered the temporal dimension with video. SAM 3 unifies detection, segmentation, and tracking under the banner of conceptual understanding.

For developers and researchers, the message is clear: the power lies in adaptation. Whether it's fine-tuning SAM 2 for a specific video domain (like autonomous driving or medical endoscopy), using its backbone for remote sensing (as with RSPrompter), or leveraging SAM 3's text-vision fusion for complex video queries, these models are not endpoints but powerful starting points.

The era of building a new segmentation model from scratch for every new object or video is fading. Instead, we are entering an age of prompt engineering, strategic fine-tuning, and architectural repurposing of models like the SAM series. The "anything" in "Segment Anything" is becoming "understand anything in space and time," and the applications—from scientific discovery to content creation—will only become more profound and accessible.
